How to write short surveys that people actually finish and that give you useful data.

A Practical Guide to Surveys That Drive Real Results

You already know surveys provide valuable feedback. The hard part is sitting down to write one.

Where most survey writers get stuck:

- Starting without wasting time

- Asking questions that lead to decisions

- Keeping it short so people actually finish

Designing a survey on top of a myriad of other responsibilities can be daunting. You may even have tried one before and gotten a handful of responses that didn't tell you much.

Let's collaborate to make this important yet challenging task a bit easier for everyone.

Whether you want to know if your members are getting value from their dues, how visitors experienced your destination, or which programs to invest in next, a well-designed survey can give you clear answers quickly.

You do not need a research degree to make a successful survey. You just need a few practical habits.

I. Start with the Decisions You Need to Make: Design the Survey Backwards

Create a survey that helps people make clear decisions.

This is the single most important step, and it happens before you write a single question. Ask yourself: what will we actually do with the answers?

Let us say your board wants to know whether members are satisfied. That is a starting point, but it is not specific enough. Think about what would change based on the results. If members say they want more social media support, would you shift resources to offer that? If they say your annual event isn't worth the cost, would you redesign it? If a specific region of your coverage area feels ignored, would you adjust your programming?

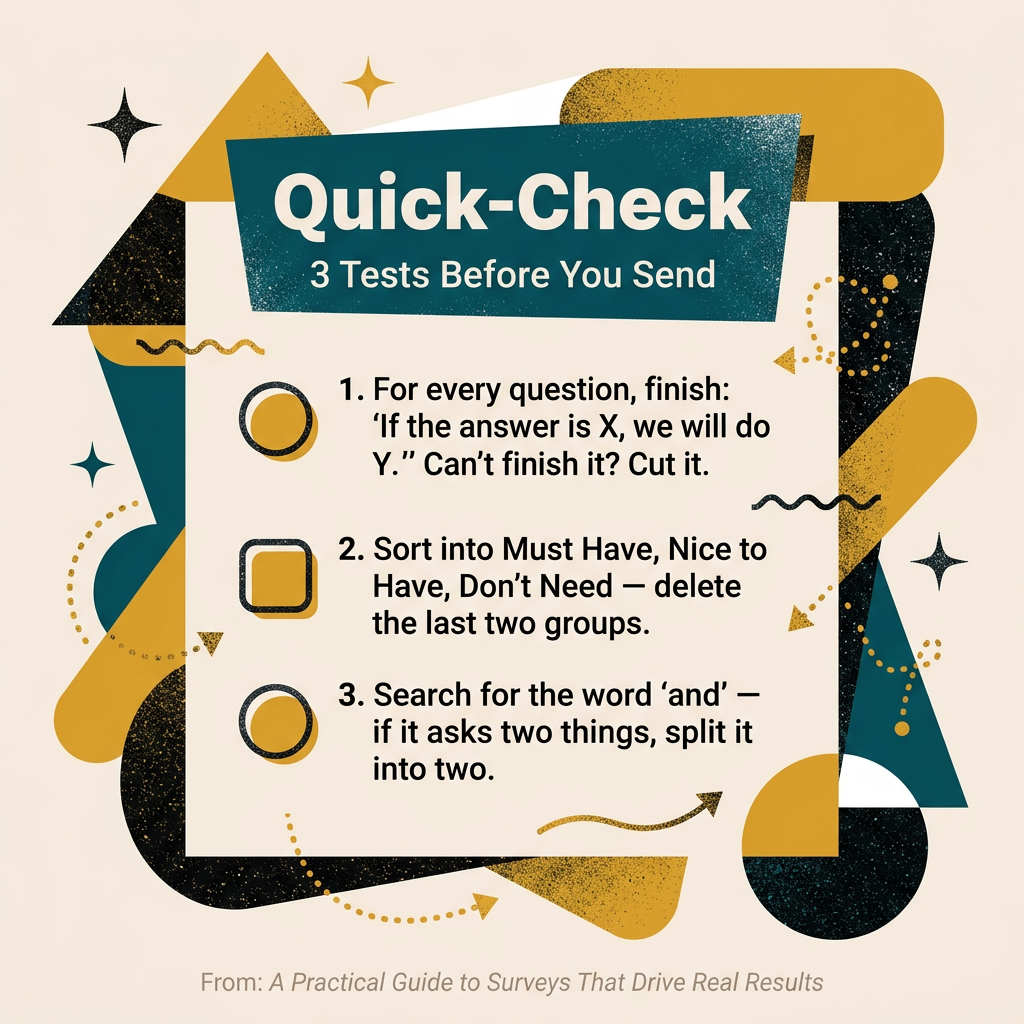

For every question you are considering, try this simple test: "If the answer is X, we will do Y. If the answer is Z, we will do W." If you cannot finish that sentence, the question probably does not belong in your survey. This practice alone, called backward design, can cut your survey length in half and double its usefulness.

II. Keep It Under Ten Minutes

Shorter surveys lead to more completions and better data.

Survey length is the biggest predictor of whether people will finish it (Brosnan et al., 2021). Every extra question you add costs you quality on every other question. People start rushing. They pick the middle option without reading. They abandon the survey entirely.

For a membership base of a few hundred people, aim for 20 to 30 questions that take about 7 to 8 minutes to complete. That is the zone where you get thoughtful answers without wearing people out.

To get there, sort your questions into three groups:

- Must have (directly tied to a decision)

- Nice to have (interesting but not essential)

- Do not need (no planned use)

Delete everything in the last two groups. It will hurt, but your data will be much better as a result.
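
If your draft questions live in a spreadsheet or a script, the three-group sort above can be sketched in a few lines of Python. This is only a minimal illustration, not a required tool: the `DraftQuestion` class, its fields, and the sample questions are hypothetical names made up for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class DraftQuestion:
    """One question from your draft (hypothetical structure for illustration)."""
    text: str
    # The decision this answer feeds, e.g. "If the answer is X, we will do Y".
    decision: Optional[str] = None
    # True if the question is merely interesting to someone on the team.
    interesting: bool = False


def triage(question: DraftQuestion) -> str:
    """Sort a draft question into one of the three groups."""
    if question.decision:
        return "must have"
    if question.interesting:
        return "nice to have"
    return "do not need"


draft = [
    DraftQuestion(
        "Was our annual event worth the cost?",
        decision="If most say no, we will redesign the event.",
    ),
    DraftQuestion("Which social media platform do you like best?", interesting=True),
    DraftQuestion("What year was your organization founded?"),
]

# Only the questions tied to a decision survive the cut.
keep = [q for q in draft if triage(q) == "must have"]

for q in draft:
    print(f"{triage(q):<13} {q.text}")
```

The point of the sketch is the rule, not the code: a question earns its place only when you can write down the decision it feeds.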

III. Ask One Thing at a Time

Be specific

One of the most common survey mistakes is asking two questions disguised as one. For example: "How satisfied are you with the quality and timeliness of our communications?" If someone thinks the quality is great but the communications arrive late, how should they respond? They guess, which means you cannot trust the data (Thomander et al., 2024).

This comes up a lot in membership surveys. You might be tempted to ask, "Did you find this resource valuable and easy to use?" Split it into two questions. One about value, one about ease. Each answer will mean something on its own, and you will know exactly what to fix.

When reviewing your draft, look for the word "and" in your questions. That word often signals that you are asking about two things at once.
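
If your draft is long, the "and" check can be automated as a first pass. The sketch below assumes your questions are available as a plain list of strings; the function name `flag_for_review` and the sample list are made up for illustration. It only flags candidates. Some questions legitimately contain "and", so a person still has to make the final call.

```python
import re

# Match "and" only as a whole word, so "understand" is not flagged.
AND_PATTERN = re.compile(r"\band\b", re.IGNORECASE)


def flag_for_review(questions):
    """Return the questions that contain the word 'and' and may ask two things."""
    return [q for q in questions if AND_PATTERN.search(q)]


draft = [
    "How satisfied are you with the quality and timeliness of our communications?",
    "Did you find this resource valuable and easy to use?",
    "How easy was this resource to use?",
    "Did you understand the renewal process?",
]

for question in flag_for_review(draft):
    print("Check for a double question:", question)
```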

IV. Use Real Numbers Instead of Vague Scales

Reserve rating scales for opinions and feelings.

Here is a question I see in a lot of surveys: "How often do you use our online resources?" with options such as Never, Rarely, Sometimes, Often, Always. The problem is that "often" means once a week to one person and once a day to another.

Whenever you can ask for a specific number, do it. Instead of that vague frequency scale, try: "In the past 30 days, how many times did you visit our website?" and let them type in the number or pick from clear ranges like 0 times, 1 to 2 times, 3 to 5 times, and so on (Recanatini et al., 2000; Brosnan et al., 2021).
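
"Clear ranges" means every possible answer fits exactly one option, with no gaps and no overlaps. Here is a minimal Python sketch of that idea. The first three ranges come from the example above; the final "6 or more times" option and the names `RANGES`, `label_for`, and `ranges_are_clean` are assumptions added for illustration.

```python
# Each entry is (lowest count, highest count, label). None means "no upper limit".
RANGES = [
    (0, 0, "0 times"),
    (1, 2, "1 to 2 times"),
    (3, 5, "3 to 5 times"),
    (6, None, "6 or more times"),  # assumed final option, not from the original list
]


def ranges_are_clean(ranges):
    """Check that each range starts right where the previous one ended."""
    for (_, prev_high, _), (low, _, _) in zip(ranges, ranges[1:]):
        if prev_high is None or low != prev_high + 1:
            return False
    return True


def label_for(visits, ranges=RANGES):
    """Return the answer option that a given number of visits falls into."""
    if visits < 0:
        raise ValueError("visits cannot be negative")
    for low, high, label in ranges:
        if visits >= low and (high is None or visits <= high):
            return label
    raise ValueError(f"no range covers {visits}")


print(ranges_are_clean(RANGES))
print(label_for(4))
```

A common slip this check catches is writing options like "1 to 3" and "3 to 5", where someone who visited three times has two correct answers.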

Save your rating scales for opinions and feelings — things like satisfaction, agreement, or likelihood of recommendation. For those, a 5-point scale with every option labeled (such as Very Dissatisfied through Very Satisfied) works well for most audiences (Toepoel et al., 2006). Just keep the direction consistent throughout your survey so people do not get confused.

V. Put the Easy Stuff First and the Sensitive Stuff Last

Establish engagement before zeroing in

The order of your questions matters more than most people realize. Research shows that earlier questions can shift answers to later ones by 10 to 30 percentage points (Tourangeau et al., 2005). That is a huge effect.

Start with broad, friendly questions that are easy to answer. These build momentum and get people invested before you ask anything that requires more thought. Group related questions together so people don't have to jump between topics. Then save your demographics and any sensitive items — like budget size, staffing, or regional identity — for the very end.

If the survey is anonymous, say so right at the top in your introduction. Something simple like: "Your answers are anonymous. Our goal is to understand how we can serve you better." That kind of transparency builds trust and gets you more honest answers (Lederer et al., 2014).

VI. Write Like You Talk

Ask like a human, not a professor (unless you are one)

Aim for an 8th-grade reading level. That is not about dumbing things down — it is about being clear. When questions use big words or complicated sentences, people do not work harder to understand them. They just guess at the meaning or skip the question entirely (Lenzner, 2011).

Replace "utilize" with "use." Replace "facilitate" with "help." Instead of "To what extent do you find that our digital communication infrastructure supports your professional objectives?" just ask, "How much do our online tools help you do your job?"
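
If you want a rough number to go with the 8th-grade target, one option is the Flesch-Kincaid grade level formula. The sketch below is an approximation, not a precise measurement: it estimates syllables by counting groups of vowels, which miscounts some words, and the function names are made up for illustration. Many word processors and survey tools have a readability check built in that will serve just as well.

```python
import re


def count_syllables(word):
    """Rough estimate: each run of consecutive vowels counts as one syllable."""
    vowel_groups = re.findall(r"[aeiouy]+", word.lower())
    return max(1, len(vowel_groups))


def grade_level(text):
    """Approximate Flesch-Kincaid grade level of a piece of text."""
    words = re.findall(r"[A-Za-z']+", text)
    if not words:
        raise ValueError("text contains no words")
    # Count sentence endings; treat text with none as a single sentence.
    sentences = max(1, len(re.findall(r"[.!?]+", text)))
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / sentences + 11.8 * syllables / len(words) - 15.59


formal = ("To what extent do you find that our digital communication "
          "infrastructure supports your professional objectives?")
plain = "How much do our online tools help you do your job?"

print(f"Formal version: grade {grade_level(formal):.1f}")
print(f"Plain version:  grade {grade_level(plain):.1f}")
```

Run on the two versions of the question above, the formal wording scores well above 8th grade and the plain wording scores well below it. Treat the score as a nudge to simplify, not as a pass or fail mark.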

Your members are busy professionals, many of them wearing five hats at once. The clearer you write, the more respect you are showing for their time.

Clarity is critical.

VII. No Leading Questions

Stay neutral.

Many destination marketing organizations (DMOs) want to know whether members are aware of everything included in their membership. It is tempting to ask, "Did you know that X is part of your membership?" But that kind of question leads people toward a "yes," even if they do not actually know (Krosnick, 2018).

A better approach: list several items — some included with membership and a couple not — and ask respondents to select all those they believe are part of their membership. This reveals what people actually know without tipping them off.

Keep the list to five to seven items so it does not feel overwhelming (Brosnan et al., 2021).

VIII. Test It Before You Send It

Have someone review your survey

Before you send your survey to your full membership, have five to ten people take it while you watch — or at least have them tell you what confused them. This is called cognitive testing, and research shows it catches 60 to 80 percent of problems that you will never spot on your own (Krosnick, 2018).

Give it to someone who will be candid with you. Someone who will say, "I did not understand question four" or "I did not know what you meant by co-op program." That honest feedback is more valuable than a polished survey that nobody understands. And when your leadership suggests changes, you will have a reason behind each design choice rather than just a gut feeling.

IX. Get People to Actually Respond

You have written a great survey. Now you need people to take it. Here are a few things that make a real difference:

- Offer incentives

- Set clear deadlines

- Deploy wisely

- Be transparent about your intent

Offer a small incentive and mention it upfront. Even a $5 gift card can boost your response rate by 10 to 30 percentage points (Berk). But the key is to tell people about it in the email subject line or the first sentence of your invitation — not bury it at the end of the survey.

Set a clear deadline. Two weeks is a good window. Most of your responses will come in the first 24 to 48 hours. If people are going to respond, they will do it quickly. The deadline just gives stragglers a reason to act.

Send it on a Tuesday, Wednesday, or Thursday. Mondays are hectic and Fridays are wind-down days. Midweek tends to yield the best response rates among professional audiences.

Tell people what you will do with their answers. "Last year, your feedback led us to launch a new social media toolkit" is far more motivating than "Please take our survey" (Berk). When people believe their input will lead to real change, they invest more effort in their answers.

After the Data Comes In

Once you have responses, share what you learned. This is the part most organizations skip, and it is a mistake. When you tell your members, "Here is what you said, and here is what we are doing about it," you build the kind of trust that makes your next survey even more successful.

A quick note on sample size: if your membership is around 300 people, aim for at least 60 responses before making any big decisions. That is the point where your numbers start to become reliable. If you can get 100 or more, even better. But anything above 60 gives you a solid foundation to work from.
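
To see what those response counts buy you, here is a minimal sketch of the standard margin-of-error calculation for a percentage, adjusted for a small membership (the finite population correction). It is not necessarily how the thresholds above were chosen. It assumes a 95 percent confidence level, the worst case of a 50/50 split, and that the people who responded resemble a random sample of your members, which is rarely perfectly true for voluntary surveys. The function name is made up for illustration.

```python
import math


def margin_of_error(responses, population, z=1.96, p=0.5):
    """Margin of error for a percentage, as a fraction (0.10 means 10 points).

    z=1.96 corresponds to 95 percent confidence; p=0.5 is the worst case.
    """
    if population < 2 or not 0 < responses <= population:
        raise ValueError("need 0 < responses <= population and population >= 2")
    standard_error = math.sqrt(p * (1 - p) / responses)
    # Finite population correction: matters when you hear from a large
    # share of a small membership.
    correction = math.sqrt((population - responses) / (population - 1))
    return z * standard_error * correction


for n in (60, 100):
    moe = margin_of_error(n, population=300)
    print(f"{n} responses out of 300: about +/- {moe * 100:.0f} percentage points")
```

Under these assumptions, 60 responses from 300 members gives roughly plus or minus 11 percentage points, and 100 responses gives roughly plus or minus 8. That is enough to tell a clear majority from a clear minority, but not enough to read much into a difference of a few points.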

And remember — your first survey does not have to be perfect. It is more important to have something out there than to wait for the ideal version that never launches. Write it, test it, trim it, send it. Then learn from what works and improve next time.

X. References

Berk, R. A. "Top 20 Strategies to Increase the Online Response Rates of Student Rating Scales."

Brosnan, K. et al. (2021). "Cognitive Load Reduction Strategies in Questionnaire Design." International Journal of Market Research. https://doi.org/10.1177/1470785320986797

Krosnick, J. A. (2018). "Improving Question Design to Maximize Reliability and Validity." https://doi.org/10.1007/978-3-319-54395-6_13

Lederer, A. M. et al. (2014). "How to Get the Information You Want: Best Practices for Survey Design and Implementation for Health Promotion Practitioners."

Lenzner, T. (2011). "A Psycholinguistic Look at Survey Question Design and Response Quality."

Recanatini, F. et al. (2000). "Surveying Surveys and Questioning Questions: Learning from World Bank Experience." Research Papers in Economics.

Thomander, R. et al. (2024). "Question and Questionnaire Design." https://doi.org/10.1017/9781009000796.017

Toepoel, V. et al. (2006). "Design of Web Questionnaires: An Information Processing Perspective for the Effect of Response Categories." https://doi.org/10.2139/SSRN.895465

Tourangeau, R. et al. (2005). "Survey Questionnaire Design." https://doi.org/10.1002/0470013192.BSA660